The Spark

If you’ve ever sold anything online, you know this pain:

You scroll past an Apple ad. That minimalist perfection. That premium feel. You want the same aesthetic for your product.

So you start reverse-engineering — what background color is that? Where’s the light coming from? What font is that? What’s the letter spacing?

Three hours later, you’ve Photoshopped something that “kind of works.” But side by side? Yours looks like a knockoff.

And here’s the real kicker: if you copy too closely, you’re risking copyright infringement.

I’ve watched countless e-commerce sellers trapped in this cycle:

- No budget for professional designers
- No time to master professional software
- No ability to translate “I want that vibe” into actionable design specs
- Copy too much and face legal risk; copy too little and it looks amateur

This problem nagged at me for months. Until I realized: the answer isn’t copying elements — it’s learning principles.

What I Built

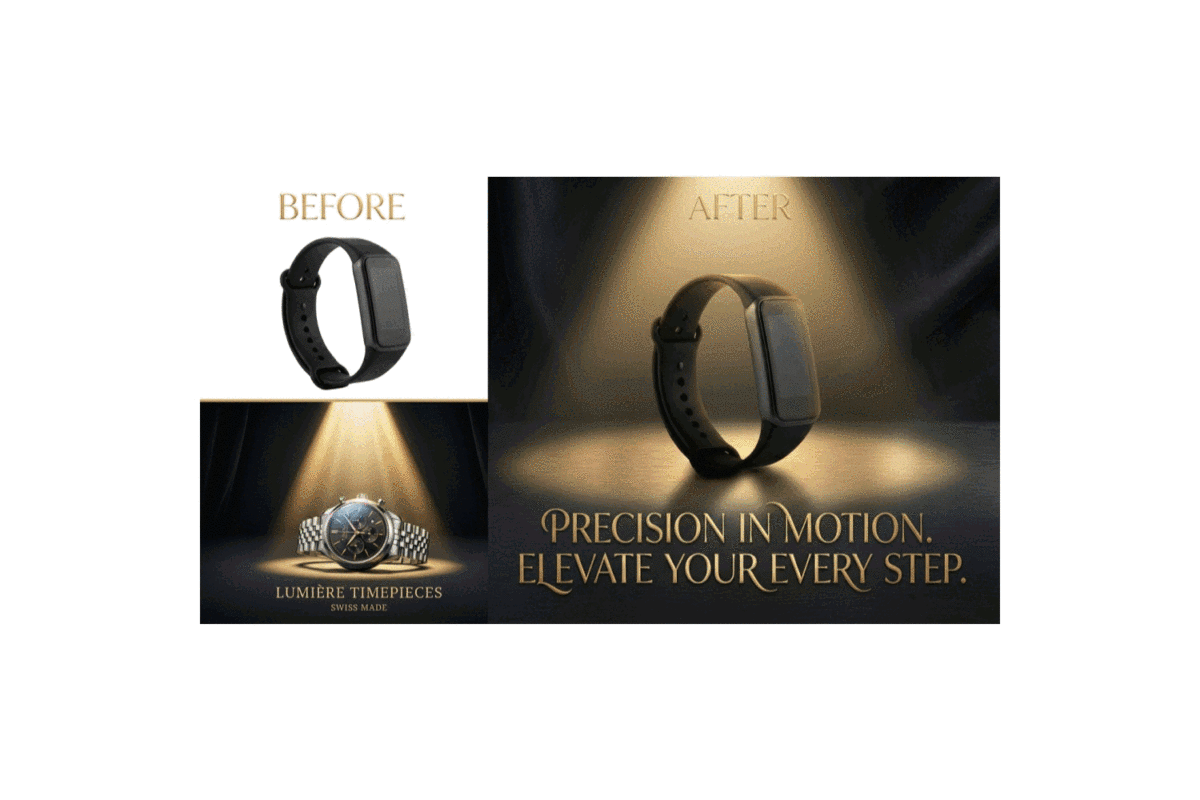

VibeMimic is an AI-powered “style inspiration” tool. It doesn’t help you plagiarize ads — it helps you understand the visual principles behind successful ads, then creates an entirely original marketing image for your product in that inspired style.

Try it: mulerun.com/@LinkAIBrain/VibeMimic

The workflow is simple:

| You Upload | AI Learns | You Get |
|---|---|---|
| Product photo + Reference ad | Mood, colors, composition, lighting, typography style | Original marketing image + Artistic slogan |

Here’s the critical distinction:

Traditional approach (risky): Extract the background from an Apple ad → Swap in your product → This is infringement

VibeMimic (safe): AI learns “minimalist, white background, centered composition, soft lighting” → Creates a completely new image for your product → This is inspiration

One is copy-paste. The other is learn-and-create.

Who This Is For

I built VibeMimic for people fighting daily battles against “my product photos aren’t good enough.”

E-commerce sellers — Want premium visuals but can’t afford designers. Your main image determines click-through rates. VibeMimic gives everyday products luxury-brand aesthetics.

Small brand owners — See competitors with great ad visuals, want to borrow the style but worry about copyright. VibeMimic lets you safely “learn from” instead of “copy.”

Cross-border sellers — Same product, different markets. VibeMimic supports any language you specify — AI generates native-quality copy, not machine translations.

Content creators — Need to rapidly produce consistent-style social media content without redesigning each image from scratch.

The common thread? They need professional results without professional prerequisites.

How I Built It

The technical architecture is deceptively simple: the entire flow requires just one API call.

The real magic lives in prompt engineering. My carefully crafted prompt guides the AI to:

- Analyze the reference image’s visual principles: not “what is this image” but “why does this image work”
- Extract abstract design principles: mood, color theory, composition approach, lighting technique
- Create entirely new backgrounds and compositions: a similar feeling inspired by the reference, with completely original elements
- Generate or translate slogans: rendered as artistic typography, not a plain text overlay
- Output 2K commercial-grade images: ready for immediate use on e-commerce platforms
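To make the five steps above concrete, here is a minimal sketch of how such a prompt might be assembled. It is not VibeMimic's actual prompt: the directive wording is paraphrased from the list, and `DIRECTIVES` and `build_style_prompt` are hypothetical names chosen for illustration.

```python
# Hypothetical directives, paraphrased from the five steps in the article.
DIRECTIVES = [
    "Analyze why the reference image works visually, not what it depicts.",
    "Extract abstract design principles: mood, color theory, composition, lighting.",
    "Create an entirely new background and composition; reuse no element of the reference.",
    "Render the slogan as artistic typography integrated into the design.",
    "Output a 2K image suitable for e-commerce listings.",
]


def build_style_prompt(product_name: str, slogan_instruction: str) -> str:
    """Assemble one text prompt to send alongside the product and reference images."""
    lines = [f"Product: {product_name}", "Follow these steps in order:"]
    # Number the directives so the model treats them as an ordered procedure.
    lines += [f"{i}. {d}" for i, d in enumerate(DIRECTIVES, start=1)]
    lines.append(f"Slogan: {slogan_instruction}")
    return "\n".join(lines)


prompt = build_style_prompt(
    "ceramic pour-over kettle",
    "Write a short tagline in English.",
)
print(prompt)
```

Keeping the directives in a plain list makes it easy to reword or reorder one step and compare results without touching the rest of the prompt.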

What Actually Worked

Three decisions made all the difference.

Positioning as “learning principles” rather than “copying elements.” From day one, I was explicit about VibeMimic’s value proposition: this is a tool that helps you understand design language, not a tool that helps you plagiarize. This positioning not only sidesteps copyright risks but helps users grasp the real value — they’re gaining reusable “style understanding,” not one-time asset replacement.

Supporting any language. Rather than limiting to preset options, I let users type whatever language they need. English, Chinese, Arabic, Vietnamese — AI generates native-quality copy in all of them. This made VibeMimic a truly global tool.

Artistic typography instead of plain text. Many similar tools just slap text on images — looks like PowerPoint. VibeMimic emphasizes “artistic typography” — handwritten scripts, elegant serifs, neon styles — making the slogan part of the design, not an afterthought.

The Mistakes I Almost Made

Wanting to support “direct image replacement.” Early users asked if they could just swap the product in the reference image with their own. Technically possible. But that turns the tool into an infringement machine. I stuck with “learn the style, create original work” and declined this “feature.”

Underestimating the importance of slogans. Version one treated slogans as optional — no input meant no text. But testing revealed images with slogans performed significantly better. So I added AI auto-generation — if users leave it blank, AI creates a tagline based on product features.

Ignoring the value of language localization. Initially I only supported English and Chinese. Then I realized cross-border sellers may need any language. Switching to “user-defined language” immediately expanded use cases.
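The slogan fallback and the free-text language field described above could be handled together in a few lines. This is a sketch under assumptions: the function name `slogan_instruction`, the English default, and the exact instruction wording are all illustrative, not taken from VibeMimic.

```python
def slogan_instruction(user_slogan: str, language: str) -> str:
    """Return the slogan directive to include in the image prompt.

    A blank slogan asks the model to write a tagline; otherwise the
    user's text is kept and rendered in the requested language.
    """
    # Assumed default: fall back to English when the language field is blank.
    language = language.strip() or "English"
    slogan = user_slogan.strip()
    if not slogan:
        return f"Write a short tagline in {language} based on the product's features."
    return f'Use the slogan "{slogan}", translated into {language} if needed.'


print(slogan_instruction("", "Vietnamese"))
print(slogan_instruction("  Brew slow  ", ""))
```

Because the language is passed through as free text rather than chosen from a fixed list, supporting a new market requires no code change.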

Open Source: Nano Banana Pro Edit Demo Workflow

I’m sharing the core n8n workflow that powers VibeMimic’s image generation — Edit API calls, async polling, error handling, all of it. One JSON file. Import and go.

This isn’t the full VibeMimic — it’s a foundation template. Build your own creative applications on top: product photo enhancement, style transfer, ad generation — the underlying logic is the same.
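The shared workflow is an n8n JSON file, so the following Python is only a sketch of the async polling and error handling idea, not a copy of its nodes. The `get_status` callable stands in for whatever HTTP request checks the job, and the state names `pending`, `completed`, and `failed` are assumptions rather than the real API's values.

```python
import time
from typing import Callable


class JobFailed(Exception):
    """Raised when the job reports failure or never finishes."""


def poll_until_done(
    get_status: Callable[[], dict],
    interval_s: float = 2.0,
    max_attempts: int = 30,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    """Call get_status until it reports completion, failure, or we give up."""
    for _ in range(max_attempts):
        status = get_status()
        state = status.get("state")
        if state == "completed":
            return status
        if state == "failed":
            raise JobFailed(status.get("error", "unknown error"))
        # Still pending: wait before asking again.
        sleep(interval_s)
    raise JobFailed(f"job still pending after {max_attempts} attempts")


# Usage with a fake status source standing in for the real HTTP call.
responses = iter([
    {"state": "pending"},
    {"state": "pending"},
    {"state": "completed", "image_url": "https://example.com/out.png"},
])
result = poll_until_done(lambda: next(responses), sleep=lambda s: None)
print(result["image_url"])  # prints https://example.com/out.png
```

Capping the number of attempts means a stuck job surfaces as an error instead of leaving the workflow waiting forever.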

Grab it here:

github.com/LinkAIBrain/mulerun-n8n-examples/…/NanoBananaPro-Image-Edit.json

Two seconds. Zero cost. Star the repo and hit the heart on this article. It helps other builders discover these resources, and honestly, it keeps me motivated to open-source more.

Quick Start

- Download the JSON file
- Import into n8n (Settings → Import from File)
- Add your MuleRun API credentials
- Upload two test images: one subject image, one reference image
- Start iterating

If you’ve touched n8n before, you’ll feel at home immediately. If you haven’t, this makes a surprisingly good first project.

Why MuleRun Made This Possible

I built VibeMimic in one day. Not one week. Not one month. One day.

That timeline exists because MuleRun absorbed everything that wasn’t core to the product:

| Without MuleRun | With MuleRun |
|---|---|
| Rent and manage servers | Handled by MuleRun |
| Integrate multiple model APIs | Handled by MuleRun |
| Build payment infrastructure | Handled by MuleRun |
| Handle user auth | Handled by MuleRun |
| Monitor usage | Handled by MuleRun |

I spent my time on what actually matters: prompt engineering, user experience, use case refinement. The infrastructure? MuleRun made it invisible.

The Formula

VibeMimic isn’t revolutionary technology. It’s focused execution on a specific problem.

- Find a creative pain point: e-commerce sellers want premium visuals but face skill barriers and copyright risks
- Solve it elegantly: learn style principles, create original work, generate in 30 seconds
- Let infrastructure disappear: MuleRun handles everything that isn’t the product itself

This pattern repeats. There’s still so much friction in creative workflows waiting to be eliminated by AI — if someone builds the bridge.

Your AI agent is waiting to be built.

Start shipping.